The U.S. government has a new AI framework.

And business leaders should use it to accelerate product development, go-to-market, and other operations safely and securely.

This guidance comes in the form of the National Institute of Standards and Technology (NIST) Artificial Intelligence (AI) Risk Management Framework (RMF) version 1.0. A relatively dense document, it is nonetheless useful for organizations grasping for ways to deploy AI tools responsibly and effectively. NIST also provides an extensive companion playbook with a series of suggested actions for using the AI RMF.

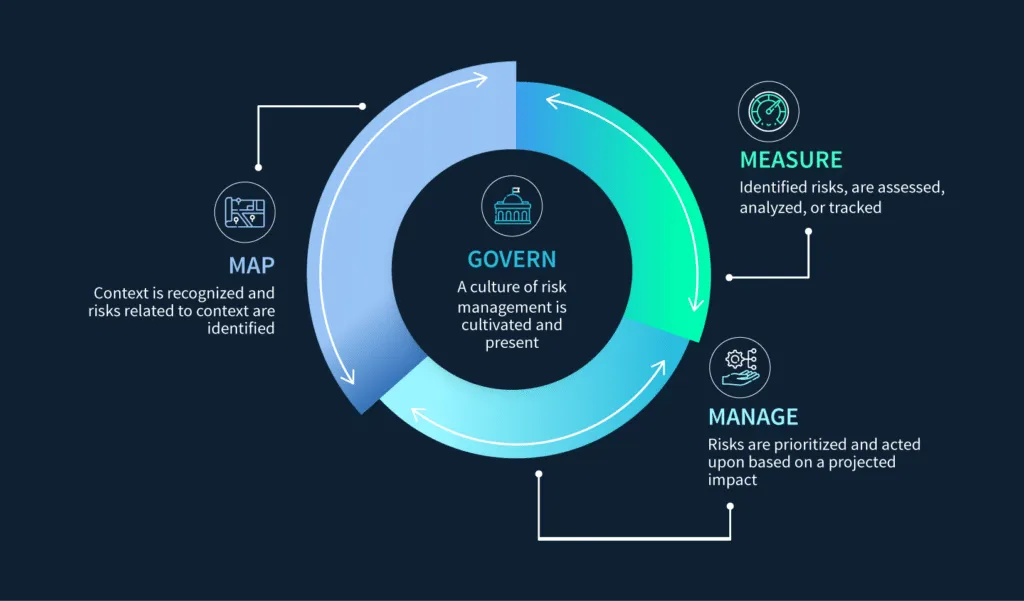

In this post, though, we’ll cut through the fluff. Below are the details you need to plan and start implementing the core RMF functions of GOVERN, MAP, MEASURE, and MANAGE right away.

Risk tolerance: balance costs and benefits

As with any technology, using AI is all about balancing risk. It’s an amazing tool that can supercharge your organization’s productivity and eliminate toil. At the same time, using it can present cybersecurity, privacy, and information risks such as:

- Revealing intellectual property to competitors.

- Accidentally transferring personally identifiable information in violation of regulatory requirements.

- Providing false or misleading information to employees or customers.

The good news, however, is that the NIST AI framework acknowledges this need for tradeoffs. Its stated goal is to help organizations “minimize anticipated negative impacts of AI systems and identify opportunities to maximize positive impacts.”

To determine the optimal balance, AI system owners can establish and document their risk tolerance ahead of time. Business leaders who have accountability for the success or failure of a given initiative are best positioned to do this. With input from appropriate subject matter experts (e.g., security, privacy, and legal teams), they should consider factors such as the following when making decisions:

- Cybersecurity threats and vulnerabilities

- Cost

- Statutory and contractual obligations

- Ethical considerations

- Competitive dynamics

The deliverable from this process should be a list of applications and data types acceptable for AI use cases. Once your organization has documented this – optimally in an intuitive and version-controlled risk register – you can develop appropriate governance structures.
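To make the deliverable concrete, here is a minimal sketch of what such a register could look like if kept as code in a version-controlled repository. The entry fields, application names, data classifications, and owners are all hypothetical illustrations, not something the NIST AI RMF prescribes.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToleranceEntry:
    """One row of a hypothetical risk tolerance register."""
    application: str               # AI tool or use case being approved
    allowed_data_types: frozenset  # data classifications it may process
    owner: str                     # accountable business leader
    version: int = 1               # bump on every documented change


# Illustrative entries only; real values come from your own risk decisions.
REGISTER = {
    "marketing-copy-assistant": ToleranceEntry(
        application="marketing-copy-assistant",
        allowed_data_types=frozenset({"public", "internal"}),
        owner="VP Marketing",
    ),
    "support-chat-summarizer": ToleranceEntry(
        application="support-chat-summarizer",
        allowed_data_types=frozenset({"public"}),
        owner="Head of Support",
    ),
}


def is_within_tolerance(application: str, data_type: str) -> bool:
    """True only if the application is registered and the data type is approved."""
    entry = REGISTER.get(application)
    return entry is not None and data_type in entry.allowed_data_types
```

Anything not explicitly listed is treated as outside tolerance, which keeps the default conservative until a business owner approves a new use case.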

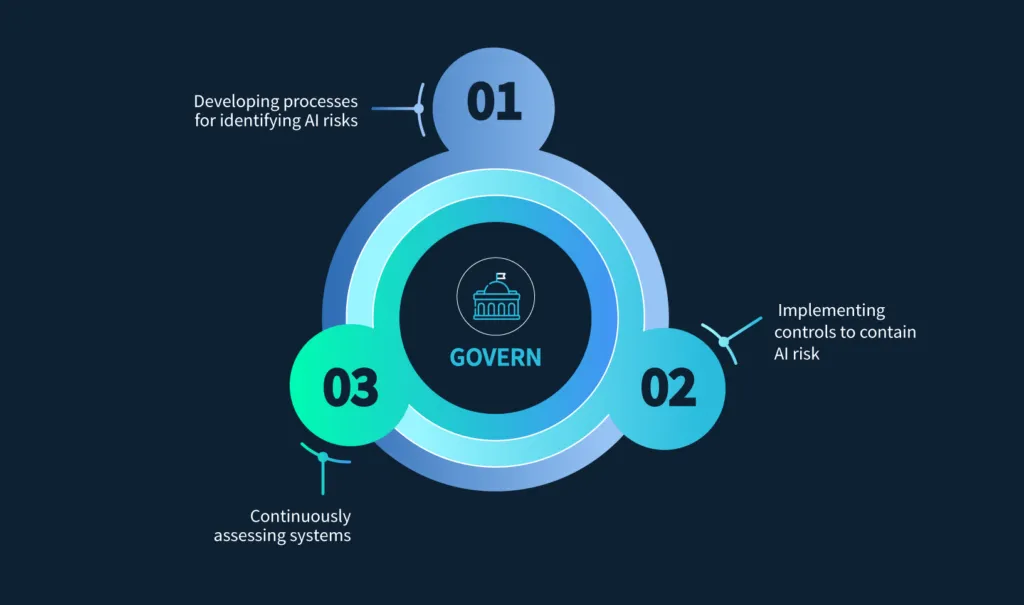

GOVERN: set up your AI program for success

With your risk tolerance established, your next task is to build an organizational foundation for managing the use of AI systems. The GOVERN function includes:

- Developing a culture and process for identifying and tracking AI risks. Especially important is training staff members in the secure, ethical, and compliant use of these tools.

- Implementing controls to ensure AI use remains within the organization’s risk tolerance. In addition to drafting policies, this can include identifying technical controls to prevent the ingestion of sensitive data or monitoring AI tool usage to identify abuse.

- Allowing for continuous reassessment of these systems from technical, cybersecurity, privacy, legal, and ethical angles.

As the scaffolding for a proper risk management approach, governance is an ongoing function that needs regular human reassessment of AI tools and the environment in which they operate.
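As one illustration of a technical control that keeps sensitive data out of AI tools, the sketch below redacts a prompt before it leaves your environment. The two patterns and the `redact` helper are assumptions made for this example; a real deployment would more likely rely on a vetted data loss prevention tool than on hand-written regular expressions.

```python
import re

# Hypothetical patterns for illustration; they will miss many real-world formats.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(prompt: str) -> tuple[str, list[str]]:
    """Replace matches with placeholders and report which pattern types fired."""
    findings = []
    for label, pattern in PATTERNS.items():
        prompt, count = pattern.subn(f"[{label}]", prompt)
        if count:
            findings.append(label)
    return prompt, findings


if __name__ == "__main__":
    cleaned, found = redact("Contact jane@example.com, SSN 123-45-6789")
    print(cleaned)  # Contact [EMAIL], SSN [US_SSN]
    print(found)    # ['EMAIL', 'US_SSN']
```

Logging the returned findings (not the original text) also gives you a usage signal for the monitoring side of GOVERN.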

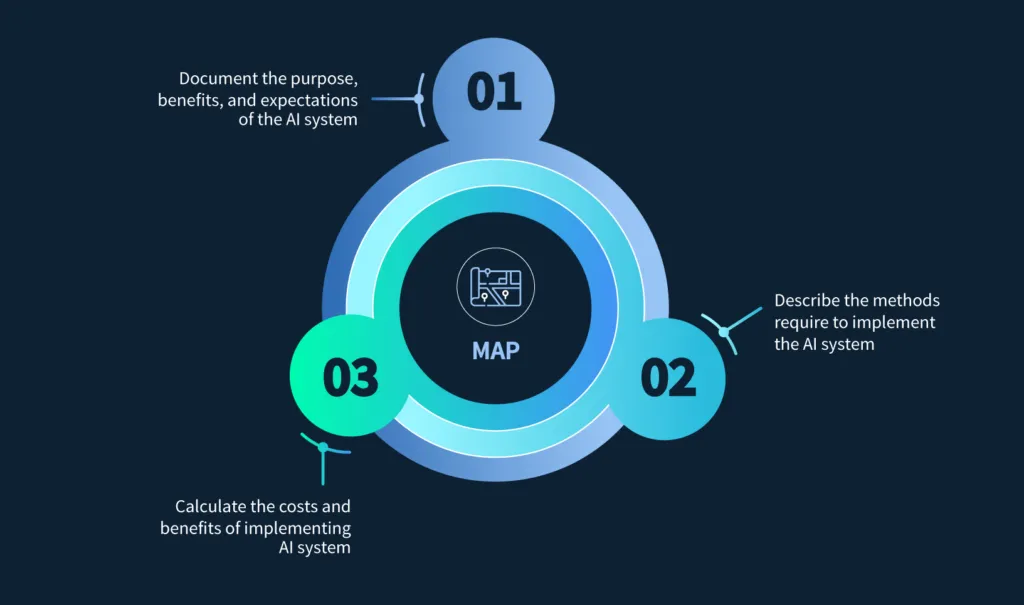

MAP: tying ends to means

The second function – MAP – requires categorizing AI systems, understanding their capabilities and usage, identifying risks and benefits, and characterizing impacts to individuals, groups, communities, organizations, and society.

Here are three concrete steps organizations can take to implement the MAP function:

- Document the intended purposes, beneficial uses, context-specific laws, norms and expectations, and settings relevant to the AI system. For example, a healthcare organization conducting patient data analysis should understand the legal and ethical norms around patient privacy.

- Define specific tasks and methods to implement the AI system. In the case of an e-commerce company providing product recommendations, it should clearly define the tasks the AI system will support, such as analyzing user behavior, predicting preferences, and generating suggestions.

- Evaluate the potential benefits and costs of intended AI system functionality and performance. For example, a financial institution using AI for fraud detection should understand the AI system’s ability to detect suspicious transactions, the money to be saved, and the costs associated with potential errors.

Tying these considerations to specific actions and business processes can be challenging to do manually. Using a platform with an array of integrations that can automatically collect data from different tools and systems can greatly streamline the process.
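For the fraud detection example above, the benefit and cost comparison can be roughed out with simple arithmetic. This is a sketch under stated assumptions: the field names and the formula (savings from caught fraud, minus false-alarm and operating costs) are our own simplification, and every number you feed in would be an estimate.

```python
from dataclasses import dataclass


@dataclass
class FraudModelEstimate:
    """Hypothetical monthly estimates for a fraud detection system."""
    fraud_cases: int             # expected fraudulent transactions
    detection_rate: float        # share of fraud the system catches (0 to 1)
    avg_loss_per_fraud: float    # money lost when fraud goes undetected
    false_alarms: int            # legitimate transactions wrongly flagged
    cost_per_false_alarm: float  # review time, customer friction, etc.
    operating_cost: float        # licences, infrastructure, staff


def net_benefit(e: FraudModelEstimate) -> float:
    """Money saved by caught fraud minus false-alarm and operating costs."""
    savings = e.fraud_cases * e.detection_rate * e.avg_loss_per_fraud
    error_cost = e.false_alarms * e.cost_per_false_alarm
    return savings - error_cost - e.operating_cost
```

A negative result does not automatically rule a system out, but it is a prompt to revisit the intended use or the assumptions behind the estimates.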

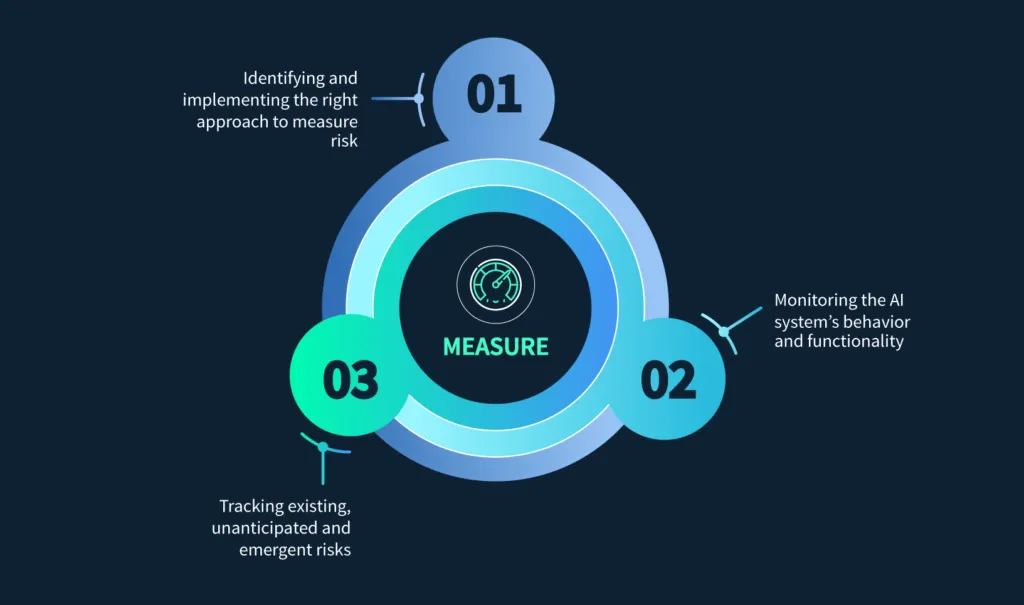

MEASURE: tracking what matters

The MEASURE function requires using quantitative, qualitative, or mixed-method tools to analyze, assess, benchmark, and monitor AI risk. This includes:

- Selecting and implementing appropriate approaches for measuring risk. For example, a company using AI for credit scoring should measure the accuracy, fairness, and transparency of the tool, documenting any risks or trustworthiness characteristics that cannot be measured.

- Monitoring the functionality and behavior of the AI system and its components in production. For instance, a healthcare organization using AI to support diagnoses should regularly evaluate the system’s performance, reliability, and safety as compared to an expert human’s abilities.

- Establishing approaches and documentation to identify and track existing, unanticipated, and emergent risks. A tech company algorithmically moderating content should establish controls to evaluate, over time, whether the system is biased and how it affects privacy and freedom of speech.

Ensuring you have the right tools in place to collect data from AI systems and the correct frameworks for analyzing it from a risk management perspective is key to this step.
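To show what measuring can look like for the credit scoring example, here is a minimal sketch that computes accuracy and one simple fairness signal: the gap in approval rates between groups (often called demographic parity difference). The function names are our own, and this is only one of several fairness definitions; the RMF does not mandate a specific metric.

```python
from collections import defaultdict


def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Share of predictions (1 = approve, 0 = decline) that match the true labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def approval_rate_gap(predictions: list[int], groups: list[str]) -> float:
    """Largest difference in approval rate between any two groups."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for p, g in zip(predictions, groups):
        approved[g] += p
        total[g] += 1
    rates = [approved[g] / total[g] for g in total]
    return max(rates) - min(rates)
```

Tracking both numbers over time, rather than once at launch, is what turns them into a monitoring control. Characteristics you cannot quantify this way, such as transparency, still need to be documented as the framework suggests.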

MANAGE: aligning, correcting, and optimizing

With the right information, organizations can then prioritize, respond to, and manage AI risks by:

- Prioritizing documented AI risks, based on the output from the MAP and MEASURE functions, by considering impact, likelihood, and available resources or methods for mitigation. For example, a company using AI for customer service might prioritize risks related to data privacy and system reliability over ease of use, deciding that protecting customer information is more important than having a perfectly seamless experience.

- Mitigating, transferring, avoiding, or accepting risk. A hospital conducting automated diagnosis of disease should develop a plan to mitigate risks related to inaccurate results, such as adding human review steps. Alternatively, a video game company might accept the risk of inaccurate decisions made by an AI system designed to detect in-game cheating.

- Regularly applying controls to manage third-party risk. Making sure you aren’t exposed to undue cyber or compliance risk from your supply chain is critical. Including AI-related due diligence as part of vendor reviews and monitoring can help to identify these issues.
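The prioritization step above can be sketched as a simple scoring exercise. The 1-to-5 scales and the impact-times-likelihood score are common risk management conventions that we are assuming here, not something the RMF specifies, and the `Risk` class is purely illustrative.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    impact: int      # 1 (minor) to 5 (severe), assumed scale
    likelihood: int  # 1 (rare) to 5 (almost certain), assumed scale
    treatment: str = "undecided"  # mitigate, transfer, avoid, or accept

    @property
    def score(self) -> int:
        """Simple impact x likelihood score."""
        return self.impact * self.likelihood


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Highest score first; ties keep their original order."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


if __name__ == "__main__":
    register = [
        Risk("Chatbot UI is clunky", impact=2, likelihood=4, treatment="accept"),
        Risk("Customer data leaked via prompts", impact=5, likelihood=3, treatment="mitigate"),
        Risk("Vendor model outage", impact=3, likelihood=2, treatment="transfer"),
    ]
    for risk in prioritize(register):
        print(risk.score, risk.name, "->", risk.treatment)
```

A score like this is a starting point for discussion; available resources and mitigation options still need human judgment before a treatment is chosen.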

Conclusion: accelerating your business with AI, securely

With the self-reinforcing cycle of GOVERN, MAP, MEASURE, and MANAGE, the RMF gives organizations a basic structure for using AI responsibly. As with all compliance frameworks, however, the devil is in the (implementation) details. Mapping each function to concrete actions in the real world is what will actually help to deal with risks appropriately.

Trying to track all of this work in spreadsheets simply isn’t going to cut it, though.

So if you are interested in seeing how the Scrut platform can help you to accelerate compliance with this and other frameworks, schedule a demo now!

The NIST AI Risk Management Framework (AI RMF) is a voluntary framework that helps organizations identify, assess, and manage risks associated with AI systems. It provides guidance for developing trustworthy AI while addressing risks related to cybersecurity, privacy, safety, and ethics.

The NIST AI RMF is built around four core functions: GOVERN, MAP, MEASURE, and MANAGE. These functions guide organizations in establishing AI governance, identifying risks across the AI lifecycle, measuring system performance and trustworthiness, and implementing mitigation strategies.

AI systems can introduce risks such as data privacy violations, exposure of intellectual property, misleading outputs, and cybersecurity vulnerabilities. Implementing a structured risk management approach helps organizations balance AI innovation with security, compliance, and ethical considerations.

The MAP function focuses on understanding the context and intended use of an AI system. Organizations document the AI system’s purpose, identify stakeholders, evaluate potential benefits and risks, and assess the impact of AI decisions on individuals and organizations.

The MANAGE function prioritizes and responds to risks identified during earlier stages. Organizations decide whether to mitigate, transfer, accept, or avoid risks, implement controls, and continuously monitor AI systems to ensure they operate safely and responsibly.

Susmita Joseph is a cybersecurity and compliance writer specializing in governance, risk, and regulatory content. She focuses on making complex subjects such as AI governance, cybersecurity compliance, and risk management accessible to growing and mature organizations. With a particular interest in the intersection of AI and GRC, her work explores how emerging technologies are reshaping compliance expectations and security operations.

Team Scrut is a collective of compliance, security, and risk practitioners sharing practical guidance on building audit-ready, scalable programs. We write about SOC 2, ISO 27001, continuous compliance, third-party risk, cloud security, and GRC automation, blending regulatory depth with operator experience to help fast-growing companies strengthen trust, streamline audits, and stay ahead of evolving security demands.