A late-stage enterprise deal is moving forward smoothly until a familiar question appears in the security review: “Do you use Artificial Intelligence (AI) in your product? If yes, share your governance model and controls.”

What should be a routine step suddenly becomes a bottleneck.

The product team knows AI is already embedded across workflows, the security team assumes controls exist somewhere, and the compliance team starts searching for documentation that was never formally created. There is no clear owner, no structured documentation, and no evidence that can be shared with confidence.

This friction is not hypothetical. It reflects a broader shift in how enterprises are evaluating vendors. Security questionnaires now include AI-specific sections. Procurement teams are flagging vendors who cannot demonstrate model governance, data handling practices, or output monitoring. If you cannot answer those questions clearly and consistently, you get eliminated before the technical evaluation even begins.

BCG's 2024 research found that while 98% of companies are experimenting with AI, only 26% have developed the governance and operational capabilities needed to move beyond pilots and actually generate business value.

This signals a growing expectation from buyers that AI systems are not just used, but governed and controlled.

The gap is not in adoption. It is in proof. AI compliance is not a future regulation problem. It is a current revenue problem.

What does AI compliance actually mean?

AI compliance is often described as adhering to laws, ethical principles, or responsible AI guidelines. While these definitions are accurate, they rarely help teams that are actively building and shipping AI systems.

In practice, it means your organization can answer five questions without guesswork:

- Where are we using AI? You need a reliable inventory of models, AI-enabled features, third-party AI services, internal copilots, and business processes that rely on them.

- What data and decisions are involved? You should know what data flows into the system, whether personal, confidential, or regulated data is involved, and whether the AI output influences user decisions, customer-facing content, or internal operations. This also means understanding output risks, such as bias, hallucinations, or unintended model behavior, that can create compliance exposure in production, and validating generated content where relevant, for example with an AI text detector.

- Who owns the risk? Every material AI use case needs a clear business owner, technical owner, and review path. If ownership is fuzzy, compliance fails during reviews because nobody can answer decisively.

- What controls are in place? This includes access control, change management, testing, human review where needed, vendor oversight, and incident response. It also means actively tracking model performance, detecting drift, and surfacing anomalies over time, and not waiting for a customer complaint or audit to reveal a problem.

- What evidence can we show? The final test is whether you can produce records that demonstrate the controls are real, current, and operating as intended.

Why AI compliance is impacting deals today

AI compliance is no longer a distant concern tied to future regulation. It is showing up in immediate, high-impact moments across sales, audits, and internal operations. The shift is subtle, but the consequences are real. And the pattern is consistent across organizations.

You are not failing because of risk. You are failing because you cannot prove control.

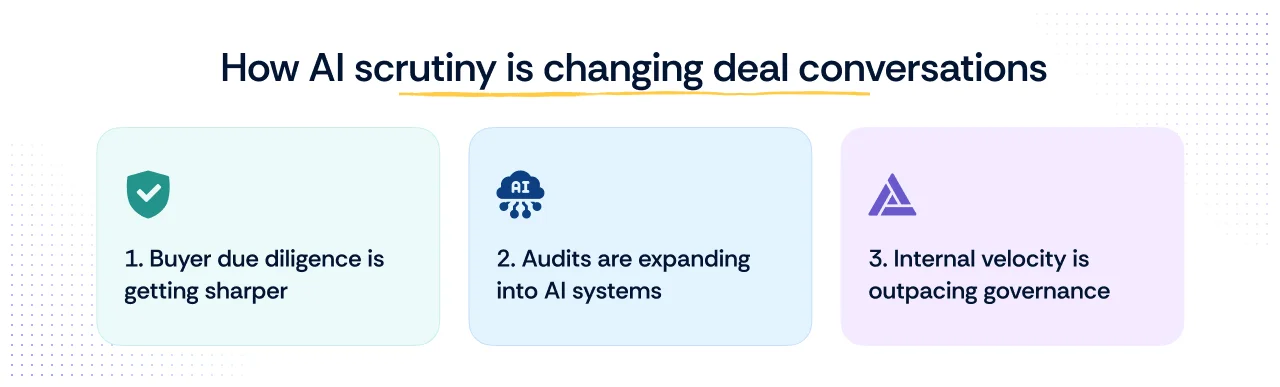

1. Buyer due diligence is getting sharper

Security questionnaires are evolving to include AI-specific questions. Teams are asked to explain where AI is used, how AI models are governed, what guardrails exist, how outputs are validated, and how risks are monitored. When answers are unclear or inconsistent, deals slow down.

In a recent Risk Grustlers episode, “All about compliance commoditization, GRC 4.0 & AI,” Nick Muy, in discussion with Aayush Ghosh Choudhury, explained how “Smaller companies are not able to access higher quality revenue… they get eliminated very early on in the sales process.”

See how AllCloud turned week-long security questionnaire responses into same-day answers.

Pro tip: Treat ISO 42001 as your AI governance blueprint, not just a certification target. Even before you pursue formal certification, aligning your documentation and controls to its structure gives your team a consistent, auditable foundation that holds up in security reviews.

2. Audits are expanding into AI systems

Audit scopes are evolving. Most companies do not go through a separate AI-specific framework audit first. Instead, AI appears in vendor risk reviews, SOC 2 gap analyses, ISO 27001 control discussions, GDPR assessments, privacy reviews, and board-level risk conversations. The question is no longer whether your frameworks mention AI by name. The question is whether your existing controls still work once AI is added to the workflow.

Auditors are beginning to ask practical questions such as, “How do you validate AI-generated outputs?” or “Do you maintain version control and documentation for your models?” This expands compliance expectations without introducing a completely new framework, which makes it harder to anticipate if you are not prepared.

3. Internal velocity is outpacing governance

AI features are being shipped quickly across products, often without a corresponding increase in governance. Engineering teams prioritize speed and experimentation, while compliance teams are left to document and validate after the fact. This creates gaps in ownership, monitoring, and evidence, especially when multiple teams are involved.

That is why AI compliance is now tied directly to revenue, audit readiness, and trust. The teams that struggle most are usually not reckless teams. They are teams that let AI adoption outpace documentation, control mapping, and evidence collection.

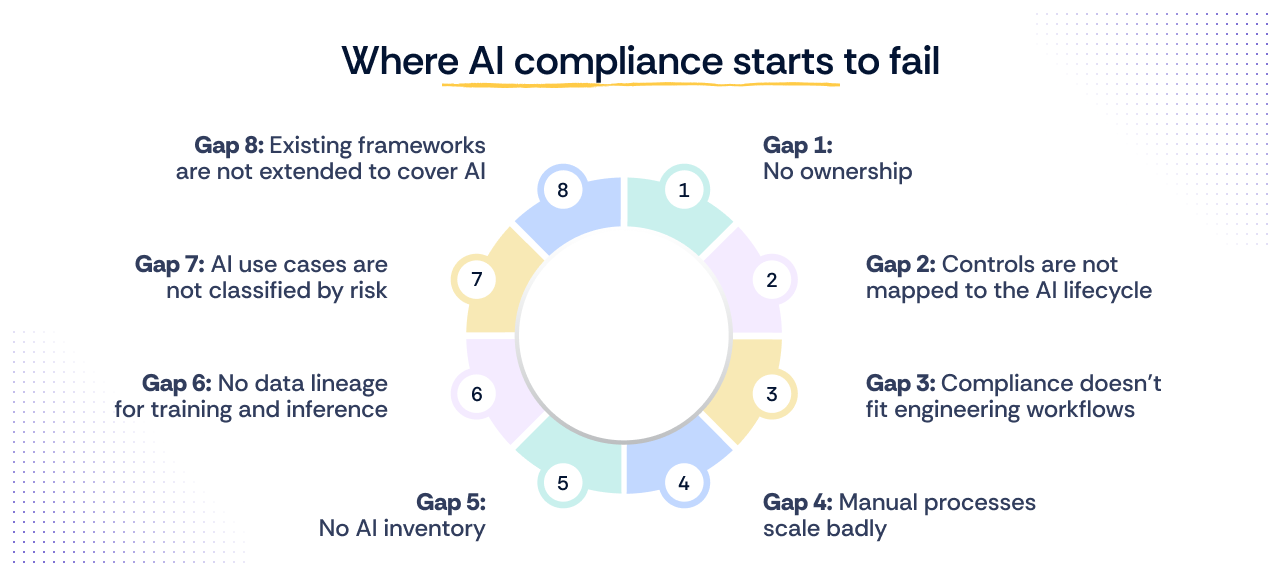

Where AI compliance breaks down in practice

Most teams believe their AI compliance posture is reasonable until someone asks for proof. The gap is rarely about intent. It is about operationalization. The same failure modes show up repeatedly, regardless of company size or how mature the security program is.

Gap 1: No ownership

AI systems span engineering, data, and security teams, but ownership rarely follows. Engineering builds and deploys models, data teams manage inputs, and security oversees risk. Yet no single owner is accountable for end-to-end AI compliance.

This is a structural problem, not a knowledge problem. The people who build the model are not the people who manage access controls. The people who manage access controls are not the people who handle data privacy. Without an explicit ownership model (who is responsible for each AI system, who reviews it, who signs off on changes), every compliance question triggers a cross-functional scramble.

When governance is not clearly defined at the leadership level, this ambiguity carries into execution and, ultimately, into every security questionnaire and RFP response. Teams spend more time figuring out who should respond to AI-related questions than actually answering them.

AI adoption has outpaced accountability structures. According to McKinsey & Company's State of AI 2025 report, 78 percent of organizations now use AI in at least one business function, yet only 13 percent have hired AI compliance specialists to manage it. The responsibility is falling on security and GRC teams who are already managing a full slate of existing obligations.

Gap 2: Controls are not mapped to the AI lifecycle

Even when controls exist, usable evidence often does not. There are no structured logs of model decisions, no audit trail of prompt or model changes, no human-review checkpoints, no rollback procedures, and no centralized record of how outputs are validated.

Gap 3: Compliance doesn’t fit engineering workflows

Engineering teams think in terms of pipelines, deployments, and access controls, while compliance teams focus on policies and documentation. The result is a disconnect. Policies are often defined separately from how systems actually operate. Controls exist on paper, but they are not embedded into workflows, making AI compliance feel like an external layer rather than part of how the system runs.

A well-known example shows how this gap can create real risk. In March 2023, OpenAI temporarily took ChatGPT offline after a bug in an open-source library exposed the titles of some users’ chat histories and, for a small share of subscribers, payment-related information to other users.

While the issue was traced to a technical bug, it exposed a deeper challenge. Systems were operating at scale, but controls around data isolation and visibility were not fully aligned with how the product behaved in production. This is exactly what happens when compliance expectations are not tightly integrated into engineering workflows.

Gap 4: Manual processes scale badly

In the absence of integrated systems, teams fall back on manual processes. Spreadsheets track models, documents capture policies, and approvals move through Slack or email. This creates friction and makes it difficult to scale AI compliance as usage grows.

This is also where shadow AI emerges. When controls are not enforced through systems, employees start using AI tools independently, outside approved workflows, and without visibility.

According to Reco's 2025 State of Shadow AI Report, 71% of knowledge workers use AI without IT approval, and unsanctioned tools run undetected for a median of over 100 days, with some persisting for more than 400 days before security teams discover them. What begins as a productivity shortcut quickly becomes a compliance blind spot that is nearly impossible to unwind once embedded in workflows.

This is what manual compliance looks like at scale. The intent exists, but the system does not support it. Without embedded controls and automated evidence, AI compliance breaks down long before audits or regulators get involved.
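One way to start surfacing shadow AI is to compare outbound traffic against an allow-list of approved AI services. The sketch below assumes you can export proxy or DNS logs as (user, domain) pairs; the domain names and both lists are hypothetical placeholders, not a vetted catalog of AI services.

```python
from collections import defaultdict

# Hypothetical placeholders: in practice, build these from your vendor
# inventory and a maintained catalog of AI service domains.
KNOWN_AI_DOMAINS = {
    "api.approved-llm.example",
    "chat.unvetted-ai.example",
    "notes.ai-helper.example",
}
APPROVED_AI_DOMAINS = {"api.approved-llm.example"}


def find_shadow_ai(log_entries):
    """Return {domain: users} for known AI domains that are not approved.

    `log_entries` is an iterable of (user, domain) pairs, e.g. parsed from
    proxy or DNS logs (the export format is an assumption).
    """
    unsanctioned = defaultdict(set)
    for user, domain in log_entries:
        domain = domain.lower().rstrip(".")  # normalize case and trailing dot
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_AI_DOMAINS:
            unsanctioned[domain].add(user)
    return dict(unsanctioned)


logs = [
    ("alice", "api.approved-llm.example"),
    ("bob", "chat.unvetted-ai.example"),
    ("carol", "Chat.Unvetted-AI.example."),
    ("dave", "intranet.example"),
]
for domain, users in sorted(find_shadow_ai(logs).items()):
    print(domain, sorted(users))
# chat.unvetted-ai.example ['bob', 'carol']
```

Exact-match lookup is deliberately simple. It will not catch subdomains or tools missing from the catalog, so treat it as a first pass that feeds the AI inventory rather than a complete control.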

Scrut Teammates, Scrut's AI-powered GRC partner, is built to close exactly this gap by automating security questionnaire responses, assessing vendor risk, and verifying audit evidence without manual digging.

Gap 5: No AI inventory

You cannot govern what you cannot see. Most organizations have no reliable inventory of where AI is being used, which models are in production, which third-party AI services are embedded in products, and which internal copilots have been quietly deployed by individual teams. Without a current, accurate inventory, every other compliance effort is built on guesswork. Security reviews expose this immediately. When a customer asks, “Where do you use AI?” the answer should not require a cross-functional investigation.

Gap 6: No data lineage for training and inference

AI systems are only as trustworthy as the data flowing through them. Yet most organizations cannot answer basic questions about where their training data comes from, how it was collected, whether it contains personal or regulated information, or how inference data is handled in production. This is not just a compliance gap; it is a liability. Regulators and enterprise buyers increasingly expect organizations to demonstrate data lineage across the AI lifecycle, not just at the point of collection.
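Demonstrating lineage usually means being able to walk from a model's training set back through every dataset it was derived from. A minimal sketch, assuming lineage is recorded as a parent map (each dataset lists its upstream datasets) with a personal-data flag on each; the dataset names and fields are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Dataset:
    name: str
    source: str  # where it came from, e.g. "helpdesk_export" (hypothetical)
    contains_personal_data: bool = False
    parents: list = field(default_factory=list)  # names of upstream datasets


def upstream(registry, name):
    """Return every dataset reachable upstream of `name`, including itself."""
    seen, stack = set(), [name]
    while stack:
        current = stack.pop()
        if current in seen:
            continue
        seen.add(current)
        stack.extend(registry[current].parents)
    return seen


def personal_data_sources(registry, name):
    """List upstream datasets flagged as containing personal data."""
    return sorted(d for d in upstream(registry, name)
                  if registry[d].contains_personal_data)


registry = {
    "support_tickets_raw": Dataset("support_tickets_raw", "helpdesk_export", True),
    "tickets_redacted": Dataset("tickets_redacted", "derived", False,
                                ["support_tickets_raw"]),
    "product_docs": Dataset("product_docs", "internal_wiki"),
    "assistant_train_v3": Dataset("assistant_train_v3", "derived", False,
                                  ["tickets_redacted", "product_docs"]),
}

print(personal_data_sources(registry, "assistant_train_v3"))
# ['support_tickets_raw']
```

The redacted dataset is not flagged, yet the traversal still surfaces the raw source behind it. That is usually the question a reviewer asks, and the redaction step is then what needs evidence.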

Gap 7: AI use cases are not classified by risk

Not every AI use case carries the same risk. A copy assistant for internal marketing is fundamentally different from a model influencing credit decisions or customer-facing recommendations. Without a risk classification system, teams apply the same level of scrutiny, or the same lack of scrutiny, to everything. This means high-risk use cases go ungoverned while low-risk ones get treated as compliance problems. Risk classification is the foundation that determines which controls, which reviews, and which evidence requirements apply.
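A risk classification does not need to be sophisticated to be useful; it needs to be explicit and repeatable. The rules below are a hypothetical starting point (the tier names, criteria, and required controls are assumptions to adapt to your own risk appetite), not a regulatory classification.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    customer_facing: bool
    uses_personal_data: bool
    influences_consequential_decision: bool  # e.g. credit, hiring, eligibility


def risk_tier(uc: UseCase) -> str:
    """Assign a tier with simple, auditable rules (illustrative only)."""
    if uc.influences_consequential_decision:
        return "high"
    if uc.customer_facing or uc.uses_personal_data:
        return "medium"
    return "low"


# Hypothetical mapping from tier to the minimum controls a use case needs.
REQUIRED_CONTROLS = {
    "low": ["inventory entry", "named owner"],
    "medium": ["inventory entry", "named owner", "change log",
               "input/output logging"],
    "high": ["inventory entry", "named owner", "change log",
             "input/output logging", "human review of outputs",
             "scheduled quality review"],
}

for uc in [
    UseCase("internal marketing copy assistant", False, False, False),
    UseCase("support reply drafting", True, True, False),
    UseCase("credit limit recommendation", True, True, True),
]:
    tier = risk_tier(uc)
    print(f"{uc.name}: {tier} -> {len(REQUIRED_CONTROLS[tier])} controls")
```

Because the rules are written down, the tier for each use case is reproducible, and the tier-to-controls mapping makes explicit why a high-risk use case gets more scrutiny than a low-risk one.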

Gap 8: Existing frameworks are not extended to cover AI

Most organizations have invested heavily in SOC 2, ISO 27001, or GDPR compliance. The assumption is that these frameworks already cover AI systems. In practice, they cover the infrastructure AI runs on, but not the AI itself. Model versioning, prompt change management, output validation, and human review checkpoints are not addressed by existing controls unless teams explicitly extend them. The gap is not in the frameworks; it is in the failure to map AI-specific risks to controls that already exist.

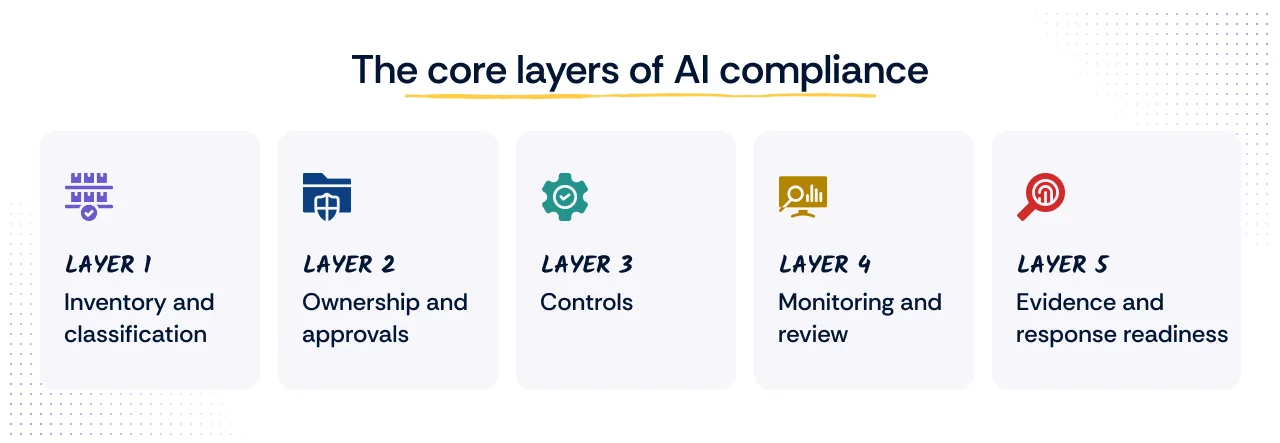

The minimal AI compliance system you actually need

AI compliance can feel overwhelming, especially with evolving regulations and rising expectations from customers and auditors. In practice, most organizations do not fail because they lack controls. They fail because they cannot demonstrate what already exists in a structured, verifiable way.

You do not need a full AI governance program to get started. You need the minimum system that unblocks deals and holds up during security reviews.

As Nicholas Muy, in conversation with Aayush Ghosh Choudhury of Scrut, explains, “It really can boil down to certain simple controls, especially for small and midsize companies.”

The goal is not to build a perfect system. The goal is to build a defensible one. Security is rarely the problem. Evidence is.

To make this practical, break AI compliance into a few essential layers:

Layer 1: Inventory and classification

Start by identifying where AI is being used across your organization. This includes internally developed models, third-party AI tools, and specific use cases such as customer support, recommendations, or internal automation.

For each AI use case, capture the business purpose, system owner, technical owner, data categories involved, user impact, external vendor dependencies, and risk tier. Not every use case needs the same depth of control. A copy assistant for internal marketing is not the same as a product feature influencing customer decisions.

Many teams underestimate this step and only discover gaps during audits. A clear inventory ensures you can answer basic questions like where AI is used, what data it touches, and which systems depend on it.
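An inventory becomes useful for reviews once incomplete entries are easy to spot. A minimal sketch, assuming one record per AI use case with fields taken from the list in Layer 1; which fields count as required, and the example entries, are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AIUseCase:
    """One inventory row; fields mirror the Layer 1 list."""
    name: str
    business_purpose: str = ""
    system_owner: str = ""
    technical_owner: str = ""
    data_categories: list = field(default_factory=list)  # e.g. ["personal"]
    user_impact: str = ""
    vendor_dependencies: list = field(default_factory=list)  # third-party AI
    risk_tier: str = ""  # e.g. "low" / "medium" / "high"


# Text fields that must be filled in before an entry counts as complete.
# Empty lists are allowed: "no personal data" or "no vendor" is a valid answer.
REQUIRED_FIELDS = ("business_purpose", "system_owner", "technical_owner",
                   "user_impact", "risk_tier")


def missing_fields(entry: AIUseCase) -> list:
    """Return required fields that are still blank for this entry."""
    return [f for f in REQUIRED_FIELDS if not getattr(entry, f).strip()]


def incomplete_entries(inventory):
    """Map each incomplete use case name to its missing fields."""
    return {e.name: missing_fields(e) for e in inventory if missing_fields(e)}


inventory = [
    AIUseCase("support reply drafting", "Draft first responses to tickets",
              "Head of Support", "ML Platform lead", ["personal"],
              "Customer-facing, reviewed by agent", ["hosted LLM API"], "medium"),
    AIUseCase("sales call summaries", business_purpose="Summarize calls in CRM",
              system_owner="RevOps"),
]

print(incomplete_entries(inventory))
# {'sales call summaries': ['technical_owner', 'user_impact', 'risk_tier']}
```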

Layer 2: Ownership and approvals

Once inventory is established, assign clear ownership. Every AI system should have a defined model owner responsible for its operation and a reviewer responsible for oversight. This is critical because AI systems span multiple teams.

Define who can approve a new AI use case, who owns the operation in production, who reviews risk, and who signs off on changes that materially affect data use, model behavior, or customer impact. A lightweight RACI is often enough, but it has to exist.

Without ownership, governance becomes fragmented, and responses to customer or auditor queries become inconsistent. Clear ownership ensures accountability and faster decision-making.
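A lightweight RACI can live as data next to the inventory, which makes ownership gaps checkable rather than anecdotal. A minimal sketch, assuming the four decision types named in Layer 2 and hypothetical role names; the rule that each decision has exactly one accountable ("A") person is the usual RACI convention.

```python
# Decisions named in Layer 2; role assignments below are hypothetical.
DECISIONS = ("approve_new_use_case", "operate_in_production",
             "review_risk", "sign_off_material_change")

raci = {
    "support reply drafting": {
        "approve_new_use_case": {"A": ["Head of Support"], "C": ["Security"]},
        "operate_in_production": {"A": ["ML Platform lead"],
                                  "R": ["On-call engineer"]},
        "review_risk": {"A": ["GRC lead"]},
        "sign_off_material_change": {"A": ["Head of Support",
                                           "ML Platform lead"]},
    },
}


def raci_problems(matrix):
    """Flag decisions with no accountable owner or more than one."""
    problems = []
    for system, assignments in matrix.items():
        for decision in DECISIONS:
            accountable = assignments.get(decision, {}).get("A", [])
            if len(accountable) != 1:
                problems.append((system, decision, len(accountable)))
    return problems


for system, decision, count in raci_problems(raci):
    print(f"{system}: '{decision}' has {count} accountable owners (expected 1)")
```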

Layer 3: Controls

Controls do not need to be complex, but they must be consistent. Start with access control to define who can modify models, prompts, or configurations. Maintain versioning so that every change is tracked and reversible if needed.

Introduce human output review mechanisms, especially for high-impact use cases, to ensure that results are reliable and do not introduce risk. If you use a third-party model, your controls should cover the integration and data flow, not just the vendor’s marketing claims.

These controls often align with existing compliance requirements, making them easier to implement.
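Access control and versioning for prompts can start as an append-only change log with an allow-list of editors. The sketch below is illustrative: the editor names, the record fields, and the use of a SHA-256 content hash to identify versions are assumptions, and in many teams source control plus CI/CD approvals already play this role.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical allow-list of people who may change this prompt.
AUTHORIZED_EDITORS = {"ml-platform-lead", "prompt-owner"}


@dataclass(frozen=True)
class ChangeRecord:
    version: int
    author: str
    content: str
    content_hash: str  # SHA-256 of the content, identifies the exact version
    reason: str
    timestamp: str     # UTC, ISO 8601


class PromptRegistry:
    """Append-only history for one prompt; every version stays retrievable."""

    def __init__(self):
        self._history = []

    def update(self, author, content, reason):
        # Access control: reject changes from anyone not on the allow-list.
        if author not in AUTHORIZED_EDITORS:
            raise PermissionError(f"{author} may not modify this prompt")
        record = ChangeRecord(
            version=len(self._history) + 1,
            author=author,
            content=content,
            content_hash=hashlib.sha256(content.encode("utf-8")).hexdigest(),
            reason=reason,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self._history.append(record)
        return record

    def current(self):
        return self._history[-1]

    def rollback(self, author, version, reason):
        """Restore an earlier version as a new entry; history is never rewritten."""
        if not 1 <= version <= len(self._history):
            raise ValueError(f"unknown version {version}")
        old = self._history[version - 1]
        return self.update(author, old.content, f"rollback to v{version}: {reason}")


registry = PromptRegistry()
registry.update("prompt-owner", "Answer politely.", "initial version")
registry.update("prompt-owner", "Answer politely and cite sources.", "add citations")
registry.rollback("ml-platform-lead", 1, "citations produced broken links")
print(registry.current().version, registry.current().content)
# 3 Answer politely.
```

Recording a rollback as a new version, rather than deleting later entries, keeps the full trail an auditor would want to see.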

Layer 4: Monitoring and review

Monitoring provides visibility into how AI systems behave over time. At a minimum, log inputs and outputs so that decisions can be traced.

Track model performance and introduce basic anomaly detection to identify drift, unexpected behavior, or failures. For higher-risk use cases, review output quality and safety trends on a schedule instead of waiting for a customer complaint.

Monitoring does not need to be advanced initially, but it must exist. Without it, issues go unnoticed until they surface during audits or incidents.
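At a minimum, that can mean one structured log record per call and a simple, explainable drift check. The sketch below writes JSON lines and uses the Population Stability Index (PSI), a common drift metric, on a numeric signal such as output length; the record fields, the bucket edges, and the 0.2 alert threshold (a widely used rule of thumb) are assumptions to tune for your system.

```python
import json
import math
from datetime import datetime, timezone


def log_call(fh, model_version, prompt_version, user_input, output):
    """Append one JSON line per model call so decisions can be traced later."""
    fh.write(json.dumps({
        "ts": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "prompt_version": prompt_version,
        "input": user_input,
        "output": output,
    }) + "\n")


def psi(baseline, current, edges):
    """Population Stability Index between two samples over fixed bucket edges."""
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            counts[sum(v >= e for e in edges)] += 1  # bucket index
        # Small floor avoids log(0) for empty buckets.
        return [max(c / len(values), 1e-4) for c in counts]

    b, c = shares(baseline), shares(current)
    return sum((ci - bi) * math.log(ci / bi) for bi, ci in zip(b, c))


baseline_lengths = [120, 135, 140, 150, 160, 155, 145, 130, 125, 150]
current_lengths = [300, 320, 310, 290, 150, 305, 315, 295, 330, 310]
score = psi(baseline_lengths, current_lengths, edges=[100, 150, 200, 250])
print(f"PSI={score:.2f}", "ALERT: investigate drift" if score > 0.2 else "stable")
```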

Layer 5: Evidence and response readiness

Your evidence package should be fast to assemble. That means system logs, approval records, design notes, testing results, policy references, vendor documentation, and exception decisions should be easy to retrieve and easy to explain.

If it takes two weeks and three teams to answer a buyer's question, the system is too manual.

At a minimum, a strong AI compliance program should let you answer four practical questions quickly: what the system does, why it is allowed to do it, what controls govern it, and what evidence proves those controls are current.
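Whether evidence is current is the easiest of those questions to check mechanically, once evidence items carry a collection date. A minimal sketch, assuming each control has a hypothetical maximum evidence age (for example, access reviews refreshed quarterly); the control IDs and dates are illustrative.

```python
from datetime import date, timedelta

# Hypothetical freshness requirements per control, in days.
MAX_AGE_DAYS = {
    "model_access_review": 90,
    "prompt_change_log": 30,
    "output_quality_review": 30,
    "vendor_assessment": 365,
}


def stale_or_missing(evidence, today):
    """Return controls whose newest evidence is missing or older than allowed.

    `evidence` maps control id -> list of collection dates.
    """
    findings = {}
    for control, max_age in MAX_AGE_DAYS.items():
        dates = evidence.get(control, [])
        if not dates:
            findings[control] = "missing"
        elif today - max(dates) > timedelta(days=max_age):
            findings[control] = f"stale (last collected {max(dates).isoformat()})"
    return findings


evidence = {
    "model_access_review": [date(2025, 1, 15), date(2025, 4, 10)],
    "prompt_change_log": [date(2025, 3, 1)],
    "vendor_assessment": [date(2024, 9, 30)],
}
for control, status in stale_or_missing(evidence, today=date(2025, 5, 1)).items():
    print(control, "->", status)
# prompt_change_log -> stale (last collected 2025-03-01)
# output_quality_review -> missing
```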

See how Athenium gained faster clarity in their SOC 2 journey using Scrut Teammates.

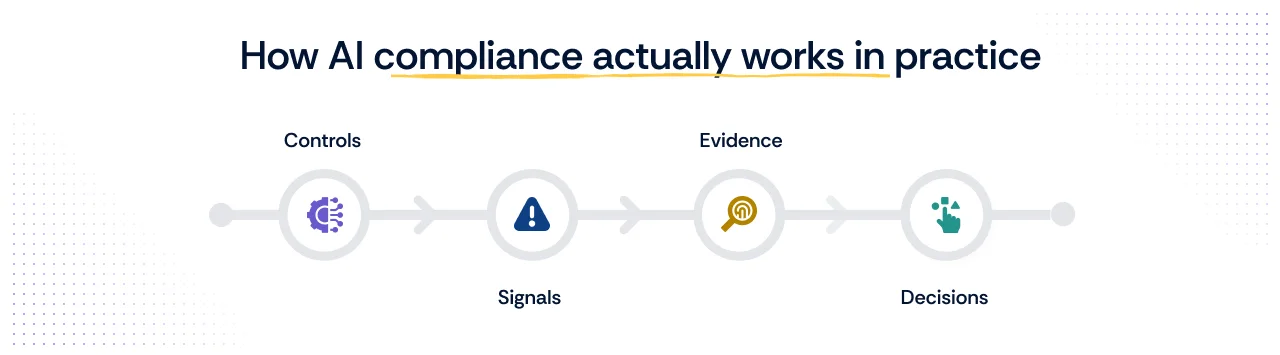

How AI compliance actually works in practice

AI compliance becomes manageable when it is treated as a system rather than a collection of disconnected activities.

At its core, AI compliance follows a simple but powerful flow: controls → signals → evidence → decisions.

This structure is critical. Without it, teams often fall into reactive patterns, adding tools or controls without clear alignment. As Nicholas Muy puts it, “We shouldn’t be doing random things… there needs to be some kind of structure.”

This is the difference between policy-driven compliance and system-driven compliance. Structure ensures that every control is tied to real system behavior, every signal produces usable evidence, and every decision is based on verifiable data.

Each step builds on the previous one and connects compliance directly to how systems operate in real time.

- Controls

Controls define expected behavior. These include rules such as restricting who can modify models, ensuring training data is handled appropriately, and requiring output validation for high-risk use cases. You define what should happen: who can approve a use case, what testing is required before release, what data is prohibited, what human checks are needed, and what monitoring must be in place.

Controls answer the question: What should happen?

- Signals

Signals represent the data generated by systems during operation. This includes logs of model inputs and outputs, access activity, configuration changes, and performance metrics.

Signals show what is actually happening.

- Evidence

Evidence is created when signals are structured and mapped to controls. Instead of raw logs, teams get organized, audit-ready records that demonstrate whether controls are working as intended.

Evidence answers the question: Can you prove it?

- Decisions

Evidence does not move directly to an audit. Before any formal review, designated owners need to assess what the evidence actually shows. Security, GRC, privacy, and AI system owners review whether controls are working as intended, whether exceptions have surfaced, and what needs remediation before anything is escalated. This human-in-the-loop step is not a formality; it is what separates a defensible compliance posture from one that simply looks good on paper. When this review layer is functioning, audits become faster, approvals become easier, and risk decisions are grounded in verified evidence rather than assumptions.
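The four steps can be illustrated end to end in a few lines: controls are expressed as checks, signals are raw events, evidence is the result of evaluating each signal against its control, and failures are queued for a human decision instead of going straight to an audit. The control IDs, event fields, and checks below are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Control:
    control_id: str
    description: str                # what should happen
    check: Callable[[dict], bool]   # evaluates one signal


CONTROLS = {
    "CHG-1": Control("CHG-1", "Prompt and model changes are approved",
                     lambda s: s.get("approved_by") is not None),
    "ACC-1": Control("ACC-1", "Only the platform team modifies model config",
                     lambda s: s.get("actor_team") == "ml-platform"),
}


def build_evidence(signals):
    """Map each signal to its control and record whether the control held."""
    evidence = []
    for s in signals:
        control = CONTROLS[s["control_id"]]
        evidence.append({
            "control_id": control.control_id,
            "event_id": s["event_id"],
            "passed": control.check(s),
        })
    return evidence


def review_queue(evidence):
    """Failures go to a named owner for a decision, not straight to audit."""
    return [e for e in evidence if not e["passed"]]


signals = [  # what actually happened, e.g. from change and access logs
    {"event_id": "e1", "control_id": "CHG-1", "approved_by": "GRC lead"},
    {"event_id": "e2", "control_id": "CHG-1", "approved_by": None},
    {"event_id": "e3", "control_id": "ACC-1", "actor_team": "ml-platform"},
]
evidence = build_evidence(signals)
print(f"{sum(e['passed'] for e in evidence)}/{len(evidence)} checks passed")
print("needs owner decision:", [e["event_id"] for e in review_queue(evidence)])
# 2/3 checks passed
# needs owner decision: ['e2']
```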

AI compliance regulations and frameworks that actually matter

AI compliance is often presented as a long list of laws and frameworks. In practice, most organizations do not need to track everything at once. What matters is understanding which regulations directly impact your product, your customers, and your ability to close deals.

EU AI Act

The EU AI Act is the most comprehensive AI regulatory framework today. It follows a risk-based model: practices that pose unacceptable risk are prohibited outright, and other systems are classified as high, limited, or minimal risk, with obligations scaling accordingly.

The impact is practical. If your AI system falls into a higher-risk category, you are expected to demonstrate controls around data quality, transparency, human oversight, and monitoring. Even if you are not based in Europe, the regulation can apply to you if you place AI systems on the EU market or their outputs are used in the EU.

US landscape

The United States does not have a single unified AI law, but expectations are evolving through frameworks and regulatory enforcement. Key frameworks and regulations that apply include:

- NIST AI Risk Management Framework (AI RMF): A voluntary framework and one of the most widely referenced, organized around four functions (Govern, Map, Measure, Manage) that cover governance, risk measurement, and ongoing monitoring of AI systems.

- NIST Secure Software Development Framework (SSDF): Supports secure development practices that can be extended to AI systems, particularly for model deployment and lifecycle management.

- Federal Trade Commission (FTC): Enforces consumer protection laws in the context of AI, shaping regulatory expectations around fairness, transparency, and accountability in AI-driven decisions.

- HIPAA: Applies to AI systems that process protected health information, requiring organizations to maintain data privacy and security controls across the AI lifecycle.

- Financial regulations (SEC, FINRA, CFPB): Sector-specific obligations continue to apply when AI systems are used in financial services, including model risk management and explainability requirements.

- State-level laws: Several US states, including California, Colorado, and Illinois, have introduced or enacted AI-specific legislation that organizations may need to account for depending on where they operate.

Global standards

Global standards are emerging to bring structure to AI compliance. Key standards that apply include:

- ISO/IEC 42001: The world's first international standard specifically for AI management systems. Helps organizations establish governance processes, define accountability, and demonstrate responsible AI use across the lifecycle.

- ISO/IEC 23894: Provides dedicated guidance on AI risk management, helping organizations identify, assess, and treat risks specific to AI systems.

- ISO/IEC 27001: Supports the information security controls that underpin AI systems, covering access management, incident response, and operational security.

- ISO/IEC 27701: Extends ISO 27001 into privacy management, addressing how personal data used in AI systems should be handled, retained, and protected.

- ISO/IEC 42005: Provides guidance on AI system impact assessments, helping organizations evaluate the broader societal and organizational effects of deploying AI.

How AI compliance fits into SOC 2, ISO 27001, and GDPR

A question many security and compliance teams ask is: if we already have SOC 2 or ISO 27001, are we covered for AI? The short answer is partially, but not completely.

Existing frameworks like SOC 2, ISO 27001, and GDPR were not designed with AI systems in mind. They cover the infrastructure AI runs on, the data it processes, and the access controls around it. But they do not address what makes AI uniquely risky: how models are trained, how outputs are validated, how bias is detected, how model behavior changes over time, and how decisions made by AI systems can be explained and challenged.

This is the gap that AI-specific frameworks such as ISO 42001 and the EU AI Act are designed to address.

Here is how to think about the difference:

| AI requirement | Maps to existing controls |
|---|---|
| Model access control | SOC 2 access control; ISO 27001 access management |
| Training data handling | GDPR data protection; ISO 27701 privacy controls |
| Logging and monitoring | SOC 2 CC7; ISO 27001 logging and monitoring |
| Model and prompt changes | SOC 2 CC8 change management; ISO 27001 change control |
| Output validation and review | SOC 2 processing integrity; internal quality controls |

What existing frameworks do not cover:

- Risk classification of AI use cases by impact and likelihood

- Model versioning, drift detection, and performance monitoring

- Explainability and transparency of AI-generated decisions

- Human oversight requirements for high-risk AI outputs

- Bias identification and mitigation across the model lifecycle

- Data lineage for training and inference data
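Keeping this mapping as data makes the gap analysis repeatable each time a framework or use case changes. A minimal sketch, using the mappings from the table and list above; the requirement keys are shorthand labels introduced here, and the control names are paraphrased from the table rather than official control identifiers.

```python
# Mappings paraphrased from the table above; keys are shorthand labels.
EXISTING_MAPPINGS = {
    "model_access_control": ["SOC 2 access control",
                             "ISO 27001 access management"],
    "training_data_handling": ["GDPR data protection",
                               "ISO 27701 privacy controls"],
    "logging_and_monitoring": ["SOC 2 CC7", "ISO 27001 logging and monitoring"],
    "model_and_prompt_changes": ["SOC 2 CC8 change management",
                                 "ISO 27001 change control"],
    "output_validation": ["SOC 2 processing integrity",
                          "internal quality controls"],
}

# The table rows plus the items listed as not covered by existing frameworks.
AI_REQUIREMENTS = list(EXISTING_MAPPINGS) + [
    "risk_classification",
    "model_versioning_and_drift",
    "explainability",
    "human_oversight",
    "bias_mitigation",
    "data_lineage",
]


def gap_report(requirements, mappings):
    """Split requirements into those covered by an existing control and gaps."""
    covered = {r: mappings[r] for r in requirements if mappings.get(r)}
    gaps = [r for r in requirements if not mappings.get(r)]
    return covered, gaps


covered, gaps = gap_report(AI_REQUIREMENTS, EXISTING_MAPPINGS)
print(f"{len(covered)} requirements map to existing controls")
print("needs new AI-specific controls:", ", ".join(gaps))
```

In a real program, each gap would be linked to a new control, for example one structured around ISO 42001, and the report rerun as mappings change.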

Where ISO 42001 and the EU AI Act add value:

ISO 42001 fills the governance gap by providing a certifiable management system specifically built for AI. It provides organizations with a structured way to document AI use cases, assign ownership, implement controls, and produce audit-ready evidence, all of which SOC 2 and ISO 27001 do not require at the AI level.

The EU AI Act goes further by creating legal obligations based on risk. If your AI system falls into a high-risk category, such as systems influencing hiring, credit, healthcare, or law enforcement, you are required to demonstrate conformity with specific technical and governance requirements, regardless of what other certifications you hold.

The practical takeaway is this: SOC 2 and ISO 27001 get you partway there. They provide the security and operational foundation. But if you are deploying AI in customer-facing or high-stakes contexts, or selling to enterprise buyers who are asking AI-specific governance questions, you need to go further. ISO 42001 gives you the structure. The EU AI Act sets the legal floor.

Explainability as a sales accelerator in AI compliance

Explainability in AI compliance means being able to clearly explain how your AI system produces its outputs. It is not about exposing complex algorithms. It is about making decisions understandable to customers, auditors, and internal stakeholders.

This matters directly in audits and enterprise sales. Buyers increasingly ask questions like, “How does your model arrive at this output?” or “Can you justify decisions made by your AI system?” If you cannot answer these clearly, it creates hesitation.

Trust is not built on performance alone. It is built on transparency. As Ryan Windham put it at Forcepoint AWARE 2025, “Compliance is no longer a check-the-box exercise. It’s now a core pillar of customer trust.”

From an AI compliance perspective, explainability supports both audit readiness and customer confidence. It helps demonstrate that outputs are not random or uncontrolled, but guided by defined logic, reviewed processes, and monitored behavior.

In practice, this does not require complex tooling to start. It requires you to focus on a few essentials, like:

- Maintaining clear documentation of how models are trained and used.

- Ensuring traceability of inputs and outputs so decisions can be followed back to their source.

- Recording changes to models and prompts so outputs can be explained over time.
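Recording changes is what makes it possible to answer, months later, “Which model and prompt produced this output?” A minimal sketch, assuming a hypothetical change history of (effective-from timestamp, model version, prompt version) entries sorted by time; `bisect_right` from the standard library finds the entry in effect when a logged output was generated.

```python
from bisect import bisect_right
from datetime import datetime

# Hypothetical change history, sorted by the time each configuration took effect.
CHANGES = [
    (datetime(2025, 1, 10, 9, 0), "model-2024-11", "prompt-v4"),
    (datetime(2025, 2, 3, 14, 30), "model-2024-11", "prompt-v5"),
    (datetime(2025, 3, 20, 8, 0), "model-2025-03", "prompt-v5"),
]
EFFECTIVE_FROM = [c[0] for c in CHANGES]


def config_at(ts):
    """Return (model_version, prompt_version) in effect at timestamp ts."""
    i = bisect_right(EFFECTIVE_FROM, ts) - 1
    if i < 0:
        raise LookupError(f"no recorded configuration before {ts.isoformat()}")
    _, model, prompt = CHANGES[i]
    return model, prompt


# Tracing a disputed output back to the configuration that produced it.
output_logged_at = datetime(2025, 3, 1, 16, 45)
print(config_at(output_logged_at))
# ('model-2024-11', 'prompt-v5')
```

If versions are already written into each log record, this lookup is unnecessary; the change history remains the source of truth for when each version was live.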

Explainability turns AI compliance from a black box into something you can defend with confidence.

Moving from AI risk to AI readiness

AI compliance is no longer a future concern that can be addressed later. It is already shaping how customers evaluate your product and how auditors assess your controls. What was once seen as a regulatory discussion is quickly becoming a product readiness requirement.

The shift is subtle but important. You are no longer being asked whether you use AI. You are being asked to prove that it is governed, monitored, and controlled. This is where many teams face friction, not because they lack capability, but because they lack structured evidence.

If you've read this far, you don't need more convincing. You need a starting point. Here are three things you can do this week that will materially improve your AI compliance posture:

1. Run a 30-minute AI inventory session with your engineering leads. Ask one question: "Where are we using AI (built, bought, or embedded) that touches customer data or influences decisions?" Write down every answer. This list is your scope. Most teams discover two to three systems they did not know about.

2. Pick your highest-risk AI system and answer five questions about it. Who owns it? Who can modify it? What data does it access? Are changes logged? Can you produce evidence of any of this? If you cannot answer all five, you now know exactly where your gaps are.

3. Pull your last three security questionnaires and search for "AI." Look at the questions you were asked. Look at the answers you gave. Were they consistent? Were they backed by evidence? If not, those are the questions you need to be able to answer before your next deal enters procurement.
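If past questionnaires can be exported as text, a short script can pull out the AI-related questions for step 3. A minimal sketch, assuming one question per line; the keyword list is an assumption to extend with your buyers' wording. Note that a plain, case-insensitive search for "ai" also matches words like "maintain" and "email", so the pattern uses word boundaries.

```python
import re

# Word boundaries stop "AI" from matching inside "maintain" or "email".
# The extra terms are assumptions; extend them to match your buyers' wording.
AI_PATTERN = re.compile(
    r"\b(?:AI|artificial intelligence|machine learning|LLMs?|generative)\b",
    re.IGNORECASE,
)


def ai_questions(lines):
    """Return (line_number, question) for lines that mention AI-related terms."""
    return [(n, line.strip()) for n, line in enumerate(lines, start=1)
            if AI_PATTERN.search(line)]


questionnaire = [
    "Do you maintain an asset inventory?",
    "Is customer data encrypted at rest?",
    "Do you use AI or machine learning to process customer data?",
    "Describe how LLM outputs are reviewed before reaching customers.",
    "Is email filtering in place?",
]
for n, q in ai_questions(questionnaire):
    print(f"{n}: {q}")
# 3: Do you use AI or machine learning to process customer data?
# 4: Describe how LLM outputs are reviewed before reaching customers.
```

Because `ai_questions` accepts any iterable of lines, an open text file works the same way as the list above.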

Explore how Scrut Automation helps you operationalize AI compliance with continuous monitoring, control mapping, and audit-ready evidence. Take a Scrut Teammates tour to learn more about how Scrut uses AI.

AI compliance tools typically fall into three categories based on what they solve:

1. Data governance tools help track data lineage, enforce privacy controls, and manage sensitive information used in AI systems.

2. Model monitoring tools focus on tracking outputs, detecting drift, and identifying anomalies in real time.

3. GRC platforms help you manage governance, risk, and compliance by organizing policies, tracking risks, and automating audit evidence in one place.

Scrut offers an AI-powered GRC platform that eliminates compliance busywork, prioritizes real risk, and streamlines follow-through.

The US does not have a single AI regulation. Instead, organizations rely on frameworks like the NIST AI RMF (National Institute of Standards and Technology AI Risk Management Framework) for structured guidance. The Federal Trade Commission enforces consumer protection laws that apply to AI systems. In addition, sector-specific regulations, such as healthcare and financial rules, apply when AI processes regulated data.

AI explainability in compliance means being able to clearly justify how a model produces its outputs. It ensures that decisions are traceable, understandable, and defensible during audits or customer reviews.

Start with a clear inventory of AI use cases. Assign ownership for each system. Implement basic controls, including access, versioning, and output review. Finally, ensure you can generate evidence through logs and documentation. This structured approach makes AI compliance practical and scalable.

Megha Thakkar is a technical content writer with about a decade of experience in cybersecurity and compliance. She writes extensively on SOC 2, ISO 27001, GDPR, and security operations, helping organizations translate complex requirements into clear, audit-ready decisions. Her work, tailored for CISOs and executive leaders, is frequently cited in U.S. government and NIST publications.

Team Scrut is a collective of compliance, security, and risk practitioners sharing practical guidance on building audit-ready, scalable programs. We write about SOC 2, ISO 27001, continuous compliance, third-party risk, cloud security, and GRC automation, blending regulatory depth with operator experience to help fast-growing companies strengthen trust, streamline audits, and stay ahead of evolving security demands.